I gathered a few of the comments made by participants of my lecture “Why quantum computers cannot work and how”, and a few of my answers. Here they are along with some of the lecture’s slides. Here is the link for the full presentation.

1) Getting started

Aram Harrow: Introduces me, mentions our Internet debate and that the day of the lecture was our first meeting in person, mentions that he (still :)) does not agree with me.

2) Special-purpose devices and the “trivial flaw”

(slides 4; 10/11).

Peter Shor: But there are various examples of classically controlled quantum evolutions

Scott Aaronson: So your critique goes only against universal quantum devices and not against computationally superior special-purpose devices? (Answer: no)

3) Topological quantum computing

Why topological quantum computing cannot shortcut the need for ”traditional” quantum fault tolerance (slides 16-18).

Aaronson: Such an argument can be made against every yet unavailable technology.

Mary Beth Ruskai: Agree(?) regarding topological quantum computers, but not that the argument has anything to do with cluster state computation. Several other people expressed the belief that cluster-state computation is not in conflict with my conjectural view of noisy quantum systems.

Shor: Do you disbelieve in the fractional quantum hall effect, then?

(Answer: No, just not in the possibility to create highly stable qubits based on anyons. Mixture of different codewords representing a topological state is OK.)

4) My conjectures 1-4

(slides 29-35):

AM1 (audience member 1): Conjectures 1-3 (but perhaps not 4) are not in conflict with quantum fault-tolerance and the threshold theorem. (Answer: I don’t think so; this was discussed in the debate.)

Ruskai: The two-qubit conjecture is too weak to cause QFT to fail. (Answer: indeed I strengthen the conjecture two slides ahead, but I am not sure that we meant the same thing.)

5) Sure/Shor separator

(slide 35):

Aaronson: Constant depth quantum computing still allows certain quantum advantages over classical computing.

Aram: also Shor’s algorithm can be implemented with rapid classical control.

(Answer: we will attend to it in due time 🙂 )

6) Smoothed Lindblad evolutions

And my conjecture that realistic quantum systems are well approximated by smoothed Lindblad evolutions (briefly: SLE). (Slides 36-39.)

Shor: You get something different (by smoothing) when you take time intervals [0,1/2] and [1/2,1]; It makes no sense that the conjecture applies in all scales; You need scales and you need units (I did not get the last point.)

Ruskai: What do you mean by “approximate?” (Answer: excellent question.)

Shor: What about spin echo (NMR)?

(A comment I made in response: actually, Aram raised NMR among several other points “against” SLEs a few days before the lecture; we will have to look at them. Since my smoothing just reorganizes the noise, I expect that it will have little effect for quantum systems that do not enact quantum fault-tolerance.)

AM2: Can you prove that SLE do not support FTQC? (Answer: no) AM2: So what are we talking about here?

Why smoothed Lindblad evolutions are still Lindblad. (Thanks to Robert Alicki.)

7) Causality

(slides 40/1/2)

AM3: My explanation resembles something in classical mechanics which naively appears to contradict causality (the principle of least action, perhaps); Aaronson: Ironically, this causality paradox from classical physics is explained using quantum mechanics. (AM3 made other good comments that I don’t remember.)

8) Simulating physics

(Slides 51/52)

Ruskai : But what does “simulate” mean? (Answer: Excellent question! But if I get to that it will not be a short answer, and probably the “yes” will be modified.)

9) A few additional remarks and questions

Alex Arkhipov: Do my conjectures forbid certain states or only some evolutions? (Answer: States are also restricted, but this requires the setting of noisy quantum computers: local operations on a Hilbert space with tensor product structure.)

Seth Lloyd: Are there experimentalists in the room? (Apparently not.) The lecture seems remote from what experimentalists care about. Lloyd does not expect mathematical proofs for impossibility of quantum fault-tolerance (answer: neither do I). He is a theoretician much involved with experimental work. In reality, there are all sorts of crazy noise (non-Lindbladian, even non-completely-positive), and one should also pay attention to 1/f noise. Overall, he disagrees with me.

Bob Connelly: How sure are you in your conjectures? What is, from 0% to 100%, your level of confidence? (I did not know how to answer this question.)

AM4: Nature does not understand ‘conjectures,’ it just does what it does.

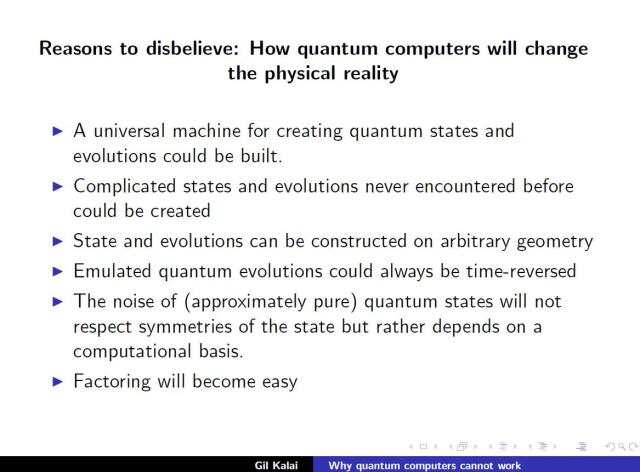

10) Reasons to disbelieve

(Slide 57; based on this post.)

Update (July 2013): The comment thread contains a long and interesting discussion with Peter Shor and Aram Harrow, mainly on smoothed Lindblad evolutions, with contributions also by Klas Markström and John Sidles. I also added a couple of remarks on further comments I heard while giving a similar lecture at HUJI and from participants in QStart, and a comment with my 5-minute talk at the rump session in QStart.

These are excellent comments/questions from Harrow, Shor, Aaronson, Ruskai, Lloyd, Arkhipov, and Connelly! To paraphrase a celebrated speech by US President John Kennedy: “Such an assembly of quantum talent has not previously been witnessed, with the possible exception of when Hugo Martin Tetrode dined alone!” (see also arXiv:1112.3748).

Thank you Gil, for inspiring everyone to think hard and well regarding these difficult quantum questions.

With respect to Aram Harrow’s questions regarding multiple-quantum NMR spin echoes, the appended two articles (out of Warren S. Warren’s group at Princeton) provide an overview of the main theoretical ideas and experimental evidence.

One-sentence summary: Magnetic resonance phenomena that are generically predicted by multiple-quantum coherence dynamics are known not to generically require multiple-quantum coherence dynamics.

—————

@article{Author = {Lee, S and Richter, W and Vathyam, S and Warren, WS}, Journal = {{J. Chem. Phys.}}, Number = {{3}}, Pages = {{874-900}}, Title = {{Quantum treatment of the effects of dipole-dipole interactions in liquid nuclear magnetic resonance}}, Volume = {{105}}, Year = {{1996}}}

@article{Author = {Zhang, H. and Lizitsa, N. and Bryant, R. G. and Warren, W. S.}, Journal = {J. Magn. Reson.}, Month = {Jan}, Number = {1}, Pages = {200–208}, Title = {{{E}xperimental characterization of intermolecular multiple-quantum coherence pumping efficiency in solution {N}{M}{R}}}, Volume = {148}, Year = {2001}}

Thanks, John! We do plan to come back to NMR as a potential counterexample to the idea of smoothing the noise in time, and also to various other concerns regarding the smoothed Lindblad evolutions.

A few more remarks, John. Indeed there were quite a few other very good questions and comments, and one of my problems was that I could not record many of them while concentrating on my talk. For example, I am not sure if the causality issue raised by AM3 is indeed the least action principle.

One more thing that I certainly need to return to is approximate cluster states, when the approximation is of the nature I expect: a mixture with undesired codewords. It was left as an open question (I think it was the first open question of the debate) whether such states still support universal quantum computation, and I do not know what the answer is.

There was something I was confused about for quite a while and a useful correspondence with Lucas Svec helped me to figure it out. Mathematically, the SLEs are a subclass of all Lindbladians. But my formula and the dependence on the past (and even on the future) looks utterly non-Markovian – so the question was how can my time-smoothing give a subclass of Lindblad evolutions.

I remember that this caused some confusion in the discussion over the blog and also, earlier, in some private discussions. At the end, this conflict of intuitions is based once more on what I refer to as the “trivial flaw”.

Of course, there is no need to stick to Lindbladian noise models when you apply my time-smoothing. (I like the term smoothed Lindblad evolutions because the initials SLE are identical to a famous (unrelated) class of stochastic evolutions introduced by Oded Schramm.)

A simple way to state the conjecture about time-smoothing is that in a realistic quantum system, applying my time-smoothing (for every time scale) gives a good approximation of the original system.

Gil, this “smoothing” program seems reasonable (to me). As for the physical mechanism of smoothing, perhaps that is simply what we call “field theory” …that is, a constraint that Nature forces upon all quantum systems, that they dynamically couple to a vacuum-state continuum. This is a quantum dynamical constraint to which Nature permits no exceptions; one wonders why?

Over on Shtetl Optimized, we see today that some of the energetic ideas that your talk “emitted” are being “absorbed” even by Hilbert-space acolyte Scott Aaronson, who thoughtfully remarks:

(emphasis as in the original).

Perhaps Nature is suggesting to us that the sobering conclusion of the previous decade “scalable quantum computing is far harder than we thought; perhaps even infeasible” has a wonderfully optimistic corollary “quantum simulation is far easier than we thought; perhaps even in P.”

To apply a favorite Feynman phrase, that would be terrific!

GK: Unfortunately this good comment and the next one were swallowed by the system until I managed to salvage them many days later. Your comment on coupling to a vacuum-state continuum and connection to field theory is very interesting, John!

Thank you Gil … further remarks by you and Aram (and others) are eagerly anticipated. On Scott Aaronson’s weblog Shtetl Optimized I have posted a link to this “smoothed quantum evolution” discussion together with some reasons why this topic (as it appears to me) has both practical and fundamental significance.

One point. Alicki’s formula for smoothed Lindblad evolutions has a term K(t,s) in them which sets the time scale. Your slide didn’t have such a term, and without that term the formula you had on the slide made no sense to me; I don’t see how you can time-smooth on all possible time scales.

Dear Peter, thanks for the remark. My slides had the term K(t-s) rather than K(t,s), so it was more restricted, and I talked about a fixed time interval [0,1]. Indeed, before your good comment in my lecture I did not give much thought to the time scale issue.

As in my initial reaction to your comment, I think that for a realistic quantum system described in terms of a Lindblad evolution it is the case that for every time interval (which prescribes the time scale), the system can be well-approximated by a smoothed Lindblad evolution. (Both the smoothing and the notion of approximation will depend on the time interval and hence on the time scale.) Less ambitiously, we can replace “realistic quantum system” by “a quantum system that ‘does not invoke’ quantum fault-tolerance.” (As I wrote in a comment above, it is not necessary to insist that the noise term is Lindbladian to start with.)

Another way to think about it is as follows. You give me a description of a quantum system that you want to realize in a certain time interval. (It is up to you to make sure that your description is based on “first principles” or that it obeys any other requirement that you regard as important.) I perform a smoothing-in-time on your system and check if the resulting quantum system well-approximates the original one. If the answer is yes I declare your system kosher. And if the answer is no I declare your system non-kosher.

Regarding time-scales and noise, another interesting issue that I try to address is how to bound from below the rate of noise for general quantum systems.

But doesn’t the smoothing have to take place along some time scale? If you blur a picture, you can’t blur it on all scales … blurring the picture on the largest scale completely destroys the pixels on the smallest scales, and blurring the picture on the smaller scales leaves the image intact on the largest scales. So how can you smooth a Lindblad evolution on all scales?

Peter, The smoothing is done with respect to a single time interval and thus with respect to a single time scale. (Or, more precisely, one time-scale at a time.) The conjecture is that the quantum system is well approximated by a smoothed one, or even by its own smoothing. If you shift your attention to a smaller time interval then you have to adjust both your smoothing kernel and your notion of approximation. (But the conjecture should still hold.)

Actually your example about blurring pictures is very good. Suppose that you conjecture that if you blur a “natural” photo it well approximates the original. You can consider this conjecture for this picture (let’s call it picture 1), and then the blurring is done w.r.t. the length scale of that picture. You can also consider this picture (picture 2) and then the blurring is w.r.t. the length scale of picture 2. Actually picture 1 is a tiny window inside picture 2 and indeed when you blur picture 2 the details of the little window of picture 1 may disappear, but this will be taken into account by your approximation measure. (See this post where these pictures come from; it would be great to take some similar pictures with you 🙂 soon.)

In this case, pictures like this one or this one will probably fail the blurring condition. The mathematical property of “blurring is a good approximation” makes an interesting distinction that our vision and image processing friends are surely aware of.
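The blurring condition can be tried on a one-dimensional toy signal. The sketch below is only an illustration of the analogy, not part of the conjecture: the function names `blur` and `blur_error`, the Gaussian kernel, and the choice of a blur width equal to 2% of the window length are all assumptions made here. The point is that the blur width is tied to the window being examined, and that a slowly varying signal passes the test while a rapidly oscillating one fails.

```python
import numpy as np

def blur(signal, width):
    """Gaussian blur of a 1-D signal; width is the kernel std in samples (assumed > 0)."""
    half = int(3 * width) + 1
    x = np.arange(-half, half + 1)
    kernel = np.exp(-0.5 * (x / width) ** 2)
    kernel /= kernel.sum()                      # normalize so constants are preserved
    padded = np.pad(signal, half, mode="edge")  # repeat edge values to limit boundary effects
    return np.convolve(padded, kernel, mode="valid")  # same length as the input

def blur_error(signal, rel_scale=0.02):
    """Relative L2 distance between a signal and its blur.

    The blur width is a fixed fraction of the window length (hypothetical
    choice), so the length scale of the blur follows the chosen window.
    """
    width = rel_scale * len(signal)
    blurred = blur(signal, width)
    return float(np.linalg.norm(blurred - signal) / np.linalg.norm(signal))

t = np.linspace(0.0, 1.0, 1000)
smooth_signal = 2.0 + np.sin(2 * np.pi * t)   # varies slowly across the window
fast_signal = np.sin(2 * np.pi * 200 * t)     # oscillates far below the blur scale

print("smooth:", blur_error(smooth_signal))   # small: blurring is a good approximation
print("fast:  ", blur_error(fast_signal))     # close to 1: blurring destroys the signal
```

Changing the window (for instance, cropping a short slice of the signal) changes the blur width along with it, which mirrors the remark that both the smoothing and the notion of approximation depend on the chosen scale.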

I am quite interested in studying quantum processes where my smoothing is a good approximation. One other thought invoked by your comment about scales is that fractalish behavior is a multi-scale property.

My question is then: how does the quantum system know whether it is doing a quantum computation on time scale t1 or on time scale t2?

Dear Peter, consider a quantum system running on a huge Hilbert space for a long time. We choose a window: a small Hilbert subspace and a small interval of time. The conjecture is that for this window the smoothed evolution will be a good approximation for the evolution without smoothing. Of course, the system “does not know” our choice of the window.

So let me see if I have it straight. I don’t believe that Nature can actually follow the equation for smoothed Lindblad evolution that you wrote down, because the K(t-s) term would have to depend on the time window that you’re looking at. But you think there is some similar kind of smoothing which happens on multiple time scales, so that whatever time scale you look at, you lose enough resolution to foil quantum computation but not enough resolution that it would have been distinguishable by experiment from the predictions of un-noisy quantum mechanics.

” I don’t believe that Nature can actually follow the equation for smoothed Lindblad evolution that you wrote down, because the K(t-s) term would have to depend on the time window that you’re looking at. But you think there is some similar kind of smoothing which happens on multiple time scales,”

This is an excellent point that needs to be clarified. I also do not believe that any smoothing is “happening.” The smoothing is not a physical process that mixes at present the noise from the past and from the future. The smoothing is a mathematical tool to describe the systematic connection between the quantum system, the device that realizes the quantum system, and the noise model. This is related to what I referred to in my talk as the “trivial flaw”. We do not have a single device capable of doing everything but we may need special devices for different tasks.

The way nature can follow my smoothing equation is this: The systematic relation between evolution-device-noise excludes those quantum systems which violate it. Again, no actual “smoothing” is happening at any scale.

So what kind of physical process actually is going on? Or do you not have any candidates?

“So what kind of physical process actually is going on? Or do you not have any candidates?”

This is again a wonderful question and here is the way I see it. Noisy quantum computers represent a level of abstraction which is not detailed enough to describe the kind of physical process that is actually going on. For that you need a much more specific physical scenario and a much more detailed modeling. But here comes the beautiful part: this high level of abstraction is nevertheless suitable to describe what actually is not going on. And what is not going on is quantum fault-tolerance.

(Since you started quantum fault-tolerance, this is not such a bad deal 🙂 )

The smoothing is offered as a formal way of saying that quantum fault-tolerance is not going on. In the debate with Aram, and my papers, I offered a few thoughts regarding the kind of physical processes which are actually going on in a few very specific implementations of quantum computation.

So your argument, boiled down, is that there is some kind of noise that interferes with quantum computation. This noise vaguely resembles another kind of noise, but it actually isn’t that noise and doesn’t behave exactly like that noise (e.g., it acts on every timescale, in particular, which smoothed Lindblad evolution doesn’t). We don’t know what is causing this kind of noise. And this kind of noise pops up out of nowhere any time you try to do a quantum computation and ruins it, but it doesn’t ruin similar phenomena which aren’t quantum computations (like the fractional quantum Hall effect).

At this point, I find this theory too vague to even try to refute.

That is a good question, whose implications (as I appreciate them) direct us toward geometric smoothing (a.k.a. “California” pullback) as more natural than temporal smoothing.

In consequence of an episode in mathematico-literary history that is recounted on Schroedinger’s Rat, perhaps Lindblad-smoothing-by-pullback might be called pieuvre dynamics, in token of the inexorably entangling tentacles of polynomial-rank product-state (Segre/secant) manifolds?

Dear Peter, thank you for your very clear summary of your point of view. My own, different, take on the matter is given in my earlier comments above (and in my lecture). I do plan to try to think about and relate specifically to some of the points you have raised.

Dear Gil, if you can find a way to make your noise model more detailed, I’ll be happy to look at it again.

Peter, one detail I certainly agree that I need to supply is the formal meaning of “approximate” in the conjecture that realistic quantum systems are well approximated by smoothed Lindblad evolutions. Beth asked about it, I completely agree that this is crucial, and I think that filling this missing piece is doable. However, it looks to me that when you say “more detailed” you mean much more than that, right?

Some kind of formula would work. I realize you have a formula for smoothed Lindblad evolution, but it depends on the timescale, and I am not buying a noise formula which depends on the timescale because Nature doesn’t know how long the quantum computation will take in advance. In fact, I suspect that it is impossible for a noise model to behave the way you want it to behave (smoothing it at every timescale, but never blurring it completely). If you can show that there is a noise model which gives multiscale smoothing, that would be great.

Dear Peter,

Briefly, I think that the main difference between our points of view is that you expect me to provide an explicit description for the class of noisy evolutions that I regard as realistic (e.g. the class of smoothed Lindblad evolutions). I am satisfied (and I think you should be satisfied too) with a definition of such a class by an implicit condition like the following: a Lindblad evolution is realistic only if it is well-approximated by a smoothed Lindblad evolution. (Or stronger, by its own “smoothing-in-time.”) I do not think that your interesting critique regarding time-scales applies here. Given a time scale you can dismiss a large number of evolutions as unrealistic, but you do not need to apply the smoothing itself on every time-scale.

Gil: I am amazed that you don’t think the time scale critique is applicable. Suppose we have a system, and when we first look at the evolution of the system from time 0 to time 1000, and we then zoom in and look at it from time 0 to time 1, we find that these two evolutions are incompatible. Are you saying that you don’t find anything wrong with that?

I’m not saying that smoothed Lindblad evolution on multiple timescales is impossible. But my intuition says that it shouldn’t work. And if it doesn’t, I am not satisfied.

Peter, the crucial point is that I refer to approximation. Think about the notion of “well-approximated” as expressing, in the case of a noisy quantum computer, an expected gap along the evolution (between the state of the system and its approximation) of […] qubits where […], say. It is possible for a quantum evolution to be well approximated by a smoothed Lindblad evolution for every time scale. The conjecture is that every realistic quantum evolution satisfies this property. The property of being well-approximated by SLE for every time scale does satisfy your compatibility requirement!

Gil, perhaps Peter’s concern is that this might be like that class of functions which has the “stronger-than-Lipschitz” property, i.e. […] for […].

You can prove all sorts of great things about such functions, but then you run into trouble when you try to find an example.

Dear Aram,

My conjecture is:

(*) A quantum system described by a Lindblad evolution is realistic only if it is well-approximated by a smoothed Lindblad evolution (for every time-window).

A somewhat stronger version is

(**) A quantum system is realistic only if it is well-approximated by its own smoothing-in-time. (Again, for every time window).

My smoothing operation depends on the time-window and hence on the time-scale. But the classes of quantum systems described by (*) or (**) do satisfy the time-scale compatibility requirement of Peter. You do not need to create a multi-scale version of smoothed Lindblad evolutions for this purpose. There is more to be said on the issue of multiple scales and noisy quantum evolutions, but indeed perhaps it will be useful for me to understand what precisely are Peter’s concerns at this time, and if Peter finds my last comment (that I largely repeat here) as a satisfactory answer to his earlier comment starting with “Gil I am amazed that… .”.

(Regarding your comment, Aram. If you refer to conditions (*) or (**) then they pose severe restrictions on noisy quantum systems but leave alive plenty of quantum systems. If you refer to a hypothetical multi-scale version of SLE that Peter talked about, then, as I explained, this is not needed.)

Gil,

What I am worried about is that you require that the evolution of the system from time 0 to 1000 be smoothed on a time scale of 1000. But my intuition tells me that any valid evolution of a system from time 0 to 1 should be the start of some (in fact, of many) valid evolutions of the system from time 0 to 1000. Can you show that this is true of smoothed Lindblad evolution, given that you are requiring that the evolution of a system from time 0 to 1 only be smoothed on a time scale of 1? Or do you disagree with my intuition?

Hi Peter,

“What I am worried about is that you require that the evolution of the system from time 0 to 1000 be smoothed on a time scale of 1000.”

Approximately smoothed

“But my intuition tells me that any valid evolution of a system from time 0 to 1 should be the start of some (in fact, of many) valid evolutions of the system from time 0 to 1000.”

Peter, we can come back to this intuition, but let’s take it for granted that you can add the identity as the unitary part (with your favorite description for the noise) from time 1 to time 1000. (Or does your intuition go further than that?)

“Can you show that this is true of smoothed Lindblad evolution, given that you are requiring that the evolution of a system from time 0 to 1 only be smoothed on a time scale of 1?”

If you take an evolution on time [0,1], which is approximately smoothed on a time scale 1, and add to it the identity as your unitary part from time 1 to time 1000, then the system from time 0 to 1000 will also be approximately smoothed. (I think that the issues of time scales as well as your intuition from the last comment are interesting and deserve further discussion, but let me try first to clarify the more technical matters.)

Hi Gil,

Can you explain how your statement works:

If you take an evolution on time [0,1], which is approximately smoothed on a time scale 1, and add to it the identity as your unitary part from time 1 to time 1000, then the system from time 0 to 1000 will also be approximately smoothed.

To get the smoothing on a time scale of 1000 from time 0 to 1, don’t you have to convolve the noise operator E_s with K(s,t)? Isn’t that going to give you a \tilde{E}_s which is different from your original E_s?

Dear Peter, my intuition is the following: If the unitary part of the evolution is the identity, then smoothing will make no difference, as the system before smoothing is identical to the system after smoothing. If the unitary part of the evolution is the identity for the last 99.9% of the time, then smoothing should make only a little difference, since for most times the noise term after smoothing is very close to the noise term before smoothing. (The precise notion of distance between two Lindblad evolutions should be chosen to make this intuition work, but it looks quite reasonable.)

If \tilde{E}_s is different but very close on average to my original E_s, then I regard the original E_s “approximately smoothed”.

For that to be correct, wouldn’t E have to be the identity whenever the unitary part of the evolution is also the identity? In other words, isn’t this assuming perfect quantum memory? If E_s isn’t the identity when the unitary part of the evolution is the identity, then won’t the noise the system accumulates between times 1 and 1000, when it is undergoing no evolution, have to be transformed back through U_{s,t} and become some very entangled noise on the system between times 0 and 1? Or am I misunderstanding your smoothed Lindblad evolution equation?

Yes, the situation in time [0,1] can be hairy. But I take the average of the distance between \tilde{E}_s and E_s over all times between 0 and 1000. Whatever happens between time 0 and 1 can make only a very small difference. (In fact, I think that you do not even need to assume that the evolution is approximately smoothed in the interval [0,1].)
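For readers following the symbols in this exchange, the smoothing and the averaged comparison being discussed can be written schematically as below. This is a reconstruction from the quantities named in the thread (E_s, \tilde{E}_s, the kernel K, and U_{s,t}), not a quotation of the slide; the window length T, the normalization of K, and the distance d between noise operations are assumptions, and the thread itself leaves the choice of d open.

```latex
% Assumed form of the smoothing on a time window [0,T]:
% K is a smoothing kernel, U_{s,t} the unitary evolution between times s and t.
\tilde{E}_t \;=\; \int_0^T K(t-s)\, U_{s,t}\, E_s\, U_{s,t}^{-1}\, ds

% Assumed reading of "approximately smoothed": the time-averaged distance
% between the smoothed and the original noise is small.
\frac{1}{T}\int_0^T d\bigl(\tilde{E}_t,\, E_t\bigr)\, dt \;\ll\; 1
```

With T = 1000 and the unitary part equal to the identity on [1,1000], the interval [0,1] contributes at most a fraction 1/1000 of the average (for a bounded d), which is the sense of "can make only a very small difference" above.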

Gil,

Then make it between 1 and 10, or 1 and 2, and not between 1 and 1000.

Peter, I don’t think your revised argument is harmful to what I am saying.

The ball park for realistic quantum evolutions are those evolutions which are well approximated (for every time window) by smoothed evolutions. The precise dependence of the approximation on the kernel used for smoothing (or just on how concentrated the kernel is) can be a factor in telling how hard it is to realize a specific noisy evolution. And somewhere in this ball park will be the border between what we can achieve and what we cannot achieve. (Quantum algorithms involving quantum fault tolerance are, I think, way out of this ball-park.)

Now, you start with a realistic noisy evolution which runs in the time interval [0,1] and at the end create a certain quantum state. Your intuition is that if we want to maintain the end-state for the time interval [1,2] or even [1,10] this new task is still in the realistic ball park. This seems correct, but since the new task is harder, the precise kernel we should take may be different.

(With your [0,1]- [1,1000] earlier version there was a possibility that something that is only somewhat harder gets asymptotically out of my ball-park altogether. But it turned out that this is not the case.)

Hi Gil,

I guess I don’t really understand your SLE theory. Maybe you could illustrate what happens on a simple example. Take a qubit. Put it in state |0⟩. Now subject it to noise with a weak depolarizing component and a strong dephasing component. Wait long enough until it is only slightly depolarized and very dephased. Rotate it by a small angle θ. Wait for the same amount of time. Then measure it.

I know what quantum mechanics predicts for the outcome. What does your theory predict will happen? If it is the same as quantum mechanics, why?
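The standard quantum-mechanical prediction for this single-qubit experiment can be computed directly with density matrices. The sketch below is an illustration under stated assumptions, not a model anyone in the thread wrote down: each waiting period is represented by one phase-flip channel with probability `p_dephase` followed by one depolarizing channel with probability `p_depol` (rather than continuous-time rates), the rotation is taken about the y axis, and all function names are hypothetical.

```python
import numpy as np

def dephase(rho, p):
    """Phase-flip channel: apply Z with probability p (kills off-diagonal terms)."""
    Z = np.diag([1.0, -1.0])
    return (1 - p) * rho + p * (Z @ rho @ Z)

def depolarize(rho, p):
    """Depolarizing channel: replace the state by I/2 with probability p."""
    return (1 - p) * rho + p * np.trace(rho) * np.eye(2) / 2

def rotate_y(rho, theta):
    """Rotate the qubit by angle theta about the y axis of the Bloch sphere."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    U = np.array([[c, -s], [s, c]])
    return U @ rho @ U.conj().T

def prob_zero(theta, p_depol, p_dephase):
    """Probability of measuring 0 after: prepare |0>, wait, rotate, wait, measure."""
    rho = np.array([[1.0, 0.0], [0.0, 0.0]])             # state |0><0|
    rho = depolarize(dephase(rho, p_dephase), p_depol)   # first waiting period
    rho = rotate_y(rho, theta)                           # small rotation
    rho = depolarize(dephase(rho, p_dephase), p_depol)   # second waiting period
    return float(np.real(rho[0, 0]))

# Weak depolarizing, strong dephasing, small rotation angle (illustrative values).
theta, p_depol, p_dephase = 0.1, 0.05, 0.45
print(prob_zero(theta, p_depol, p_dephase))
# Closed form for this parametrization: (1 + (1 - p_depol)**2 * cos(theta)) / 2
print((1 + (1 - p_depol) ** 2 * np.cos(theta)) / 2)
```

In this parametrization the dephasing strength does not affect the final measurement probability, since dephasing only shrinks the off-diagonal terms and the measurement is in the computational basis.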

Peter,

I too have been trying to understand Gil’s conjectures so I’ll take the liberty to say what I think Gil would reply here, and then Gil can tell me if I have understood him or not.

I believe that Gil claims that it is not possible to build a universal quantum computer and instead one will have to build a physical machine for the specific purpose of performing the computation in your example. This machine will not only define the computational process but its physical properties will, in a self-referential way, also determine the structure of the noise, and because of this the noise will have properties that ruin any quantum error correcting codes one wishes to use.

If this is the case then it is impossible to separate the noise on the computational process from its physical implementation, so this takes the claim outside mathematics and makes it a “physics conjecture” instead.

Does this agree with what you are saying, Gil?

Hi Klas,

This may describe Gil’s philosophy in general, but I’m looking for something much more specific: the application of the SLE conjecture to this problem. It seems to me that there is one of three possibilities. (a) Gil predicts that the evolution of this system is not that predicted by standard quantum mechanics. (b) Gil can show that there is a smoothed Lindblad operator which approximates the evolution of this system. (c) I completely misunderstand what Gil is saying.

Dear Peter and Klas,

1) Indeed Klas’s comment is a good description of my point of view. Thanks, Klas.

2) Peter’s comment is a good opportunity to clarify a few things.

Peter: “I know what quantum mechanics predicts for the outcome. What does your theory predict will happen? If it is the same as quantum mechanics, why?”

It goes without saying that for every quantum system my predictions are precisely those of quantum mechanics. The SLE is a proposed tool for telling if a quantum system is realistic, or more neutrally, if a quantum system enacts quantum fault-tolerance. (Thus, my smoothed quantum systems form a subclass of general quantum systems, and I think that they can give a good description of realistic noisy quantum evolutions.)

In cases that the quantum system is not fully specified e.g. when only the unitary part is described or only a pure target state is specified then my conjectures indeed give predictions about how a realistic quantum system meeting these partial specifications will behave. For example, one of the conjectures refers to how noisy approximations of codewords in a quantum code will behave.

3) Peter: “It seems to me that there is one of three possibilities. (a) Gil predicts that the evolution of this system is not that predicted by standard quantum mechanics. (b) [Gil can show that] there is a smoothed Lindblad operator which approximates the evolution of this system. (c) I completely misunderstand what Gil is saying.”

It looks completely obvious to me that (b) applies here. (Since this does not seem to be obvious to Peter maybe there is also some misunderstanding left.)

More generally, if you consider all quantum systems with an absolute bound D on their depth then I think that you can find a fixed Kernel for which the SLE will be a good approximation. You can make sure that most of the mass of K(x) is in a window of length (ε/D).

4) About time scales. I think that we clarified the specific concerns regarding time-scaling and smoothing but there are interesting issues left to be discussed regarding time-scales and realistic noisy quantum evolutions.

Okay, if (b) is the case, what is the smoothed Lindblad operator which approximates the evolution of the system?

Just the smoothing of the system as you described…isn’t it?

Hi Gil,

If you have two sources of dephasing noise at an angle θ, they generate depolarizing noise which (for ε large enough) gives a different physical outcome than pure dephasing noise. Here, ε needs to depend on the intensity of the dephasing noise, so fast dephasing drives the possible ε you can use towards 0.

Let’s try to figure out our differences for this nice example. The unitary evolution consists of a single gate which rotates the qubit at angle θ. We consider two equal time intervals before and after the rotation. We start with a certain noise at each time E_t and then apply smoothing with respect to a parameter ε. I claim that the average distance between \tilde{E}_s and E_s is small when ε is. (Uniformly for all choices of the starting noise E_t’s) You claim that depending on the nature of the noise itself we may need to take ε to zero. Is this a correct description of our disagreement on this example?

There’s a time constant associated with dephasing noise, and I think that the parameter ε has to scale proportionately to the time constant. But let’s take the time constant to be roughly the length of a single gate. You can then think of this computation as applying n/2 identity gates, one gate which rotates by an angle θ, and then another n/2 identity gates. I think that you are not going to get reasonable results unless you only smooth over a constant number of gates, and not over εn gates.

In my smoothing ε is always proportional to the time interval. If you wish to consider the time interval as divided into n/2 identity gates, then the rotation, and then an additional n/2 identity gates (I don’t understand why to look at it this way), then the smoothing scale in terms of number of gates will indeed be εn. (Still, it looks to me that on average \tilde{E}_s and E_s will be very close.)

Hi Gil,

Maybe I made my comment too quickly. What is ε? I didn’t realize that your conjecture allowed you to choose ε. If you are allowed to choose ε arbitrarily, then isn’t your SLE conjecture trivially true? If you’re not allowed to choose ε arbitrarily, what is the reason that you think that your conjecture works for ε = 1/1000, but not for ε = 1/3?

Furthermore, if you keep making ε small enough to allow for all physics experiments done to date, aren’t we chasing a moving target?

Hi Peter, There are two parameters which we need to choose (or to relate) one is how well (on average) \tilde{E}_s approximates E_s and the other is what is the kernel and here perhaps we can think about the kernel as a truncated Gaussian and just refer to its variance. I think that for quantum processes with quantum fault tolerance, \tilde E_s and E_s will be utterly unrelated even when ε is tiny. I would expect E_s and \tilde E_s to have good correlation for realistic cases (like the one you suggested) even for pretty large ε, say ε=1/3.

It seems that “morally” the SLE conjecture is closely related to quantum computing having bounded depth (sort of the early Unruhian approach). Of course, one may argue that if this depth D is a million then this not only accounts for all physics experiments done to date but may also allow some interesting fault tolerance computing. (This is the moving target flavour of any asymptotic claim.) Actually I expect that once you set your realistic ball park in terms of these SLE or the depth D the border line will be more in the neighborhood of D=3 rather than a million.

Hi Gil,

I don’t see how you can make this work by choosing ε small enough.

What keeps me from making a “quantum computation” which has two parts: one is my little experiment above, repeated every time step (maybe many copies repeated each time step). To do this, all you should need is to get a quantum computer to remember classical information, and if smoothed Lindblad evolution can’t remember classical information, there is something really wrong with it (since actual computers can). The other part is a true quantum computation that factors a large number. Maybe you could show that if ε is small enough that most of the time my little experiment works as it should, then ε is small enough for the actual quantum computation to work.

Hi Gil:

I think I can go back to the heart of my problem with smoothed Lindblad evolution. This was actually the intuition behind my first objection, but it’s much better clarified in my mind now. Suppose I apply some quantum gates to a quantum system. If we view the computation as taking place between times 0 to 1, you smooth using one kernel. If we view the computation as taking place between times −10 to 10, the kernel gets scaled, and we effectively have to smooth using a different kernel.

How does nature know which kernel to use???

You’ve said that it doesn’t matter, because the computation has to be smoothed at all time scales. However, if we use a kernel with too small an ε, quantum computing works perfectly, and if we use a kernel with too large an ε, we can’t match the actual predictions of standard quantum mechanics. So the time scale really does matter: we need to choose the correct ε.

My belief is that smoothed Lindblad evolution cannot work unless the smoothing mechanism somehow depends on some property of the system which tells whether a real quantum computation is or is not taking place.

One small thing about your earlier discussion: I am a little confused about epsilon. Is this the window over which we smooth? If so, what are the units? I would imagine that the units would be in terms of gate speed, so a width-10 window would mean that we average over ~10 gates. But you guys are talking about things like epsilon = 1/3.

Gil, I think Peter’s example is a nice illustration of the point that the behavior of a system depends on how many identity gates we apply. This seems not very plausible.

There is also a classical version of Peter’s argument. Suppose that we have two bits, A and B. A is noisy, and B is not. So there is a noise process that flips A according to a Poisson process with time scale 1 second. B gets flipped rarely, let’s say once a day. (Such a separation of timescales in interacting systems is physically common, e.g. if A is a nuclear spin and B is an electron spin.)

Let’s say the smoothing timescale is 1 second. Then it’s basically irrelevant.

Let’s say it’s 100 seconds. Suppose we do a single swap gate between A and B. It is physically reasonable that for that moment, B, having briefly stuck its hands in the oven, would be subject to higher rates of noise. But smoothing would say that for the next 100 seconds, B experiences noise at a rate of ~1/second. I think most people would consider this an example of a mistaken theory. Worse are the following two additional features of SLE:

1. According to the principle that the smoothing kernel is symmetric in time, B should also experience higher rates of noise for 100 seconds BEFORE the swap gate.

2. The claim is that the noise is described equally well by smoothing at timescales of 1 second, 10 seconds, 100 seconds, 1000 seconds, etc., which even in this extremely simple case I can’t even figure out a mathematically consistent way to describe.

Of course for smoothing to be interesting, it should generate different predictions than “no smoothing” would. But in the above scenario, like the one that Peter described, the predictions do not appear to resemble the world we live in.
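The two-bit scenario can be put into numbers with a small toy calculation. This is only one possible reading of the smoothing, stated as an assumption: noise occurring at time s is carried through the swap gate (placed at time 0) before being averaged into the noise at time t, so that A's noise on the far side of the swap shows up as noise on B. The Gaussian kernel, the time grid `TIMES`, and the function names are all hypothetical choices made for illustration.

```python
import numpy as np

RATE_A = 1.0            # flips per second for the noisy bit A
RATE_B = 1.0 / 86400.0  # about one flip per day for the quiet bit B

# Time grid in seconds around the swap at t = 0 (offset so no sample sits at 0).
TIMES = np.arange(-2000.0, 2000.0, 0.1) + 0.05

def gaussian_weights(center, width, times=TIMES):
    """Symmetric (Gaussian) smoothing weights, normalized to sum to 1."""
    w = np.exp(-0.5 * ((times - center) / width) ** 2)
    return w / w.sum()

def smoothed_rate_on_B(t, width, times=TIMES):
    """Toy smoothed flip rate on bit B at time t (assumed model, see lead-in).

    Noise from the same side of the swap as t is B's own slow noise;
    noise from the other side is A's fast noise carried through the swap.
    """
    weights = gaussian_weights(t, width, times)
    same_side = np.sign(times) == np.sign(t)
    rates = np.where(same_side, RATE_B, RATE_A)
    return float(np.sum(weights * rates))

for width in (1.0, 100.0):
    print(width, smoothed_rate_on_B(50.0, width), smoothed_rate_on_B(-50.0, width))
```

Under these assumptions a 1-second smoothing leaves B's rate essentially unchanged 50 seconds from the swap, while a 100-second smoothing raises it to a sizeable fraction of A's rate, and by the symmetry of the kernel it does so before the swap as well as after it.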

A small correction: In my example, I had the nuclear and electron spins backwards. The electron spin should be A, and the nuclear spin should be B.

Peter’s two most recent comments (A, B) raised excellent issues and so are some of the “follow-up” points by Aram. So I will be happy to think about them and discuss them further. There are still three minor points which may represent some misunderstanding that are better clarified first.

1) Aram: “One small thing about your earlier discussion: I am a little confused about epsilon. Is this the window over which we smooth? If so, what are the units? I would imagine that the units would be in terms of gate speed, so a width-10 window would mean that we average over ~10 gates. But you guys are talking about things like epsilon = 1/3.”

Aram, We fix a time interval and the smoothing parameter ε is always given in terms of the length of this interval. (In my setting there is no reference to “gates” and I do not see a reason to talk about gates in this context.)

2) Aram: “The claim is that the noise is described equally well by smoothing at timescales of 1 second, 10 seconds, 100 seconds, 1000 seconds, etc., which even in this extremely simple case I can’t even figure out a mathematically consistent way to describe.”

This is not the claim. The claim is that for every time interval the system is described well (in this time interval) by a smoothed system. Of course, there is no claim that if you fix a time interval then smoothing in different time scales are similar. (See e.g. this comment.)

So if you consider a 5 second window then a 1 second smoothing will give you a good picture, and if you consider a 50 second window then a 10 second smoothing will give you a good picture, and if you consider a 500 second window then a 100 second smoothing will give you a good picture, and if you consider a 5000 second window then a 1000 second smoothing will give you a good picture.

3) Peter considered a scenario where we have a quantum system in the time interval [0,1] subject to a certain noise, and to a single (non-trivial) rotation gate at time 1/2. (We discussed it back and forth, ending with my last comment here.) I still think that for this example the ε-smoothing will be a good approximation. (Remember, the quality of the approximation is given in terms of how far \tilde{E}_s and E_s are on average.)

Gil: I am confused about this dependence on the window size that we consider. What if 100 people are observing the same system, and each considers a different window size? Similarly for what you said about “fix a time interval” and then have the smoothing window be a fraction of that time interval.

Here is the picture that I have of a classical system (or a quantum system that is periodically measured). I think of it like a videotape. We can have a 30-frame-per-second record of what is going on and we can review as much or as little of it as we like. It is a function of time, defined as long as the system exists. t is time, and f(t) is the state of the system. It is not f([t_1, t_2]); i.e. not a function of an interval. I don’t know how to make something a function of an interval, other than in a trivial way, i.e. f([t_1, t_2]) is the restriction of f to the domain [t_1, t_2]. But in that case, it is not mathematically well-defined for the amount of smoothing to depend on the length of the interval. More precisely, if I have intervals [t_1, t_2] and [t_3, t_4] which overlap, then f([t_1, t_2]) and f([t_3, t_4]) should be consistent on their intersection.

I guess your answer will be that a single time-evolution will simultaneously appear smoothed on all time scales. This seems to me contradicted by Peter’s example, or my example, or indeed any nontrivial example.

Aram, Peter’s example was the following: you have a time interval [-T,T], noise E_t=E for t in the interval (which was specified but it does not matter), and at time 0 you apply a single unitary U.

When you apply smoothing at time scale εT, then for s outside [-3εT, 3εT] (say), \tilde{E}_s and E_s will be very close together, and therefore on average over the interval \tilde{E}_s and E_s will also be quite close. This is true uniformly when you consider any other time interval [-S_1,S_2] and apply smoothing proportional to the length of the interval.
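A scalar stand-in can illustrate this "average distance" argument. The sketch below is an assumption-laden toy, not the actual operator calculation: the noise, expressed in a common frame, is replaced by a step function that jumps at time 0 (where U is applied), the kernel is a Gaussian of width εT normalized on the grid, and `mean_smoothing_gap` is a hypothetical helper name.

```python
import numpy as np

def mean_smoothing_gap(T, eps, n=2001):
    """Average |smoothed - original| over [-T, T] for a unit step at time 0.

    The step function is a scalar proxy for noise that changes form when
    the gate is applied; the smoothing width is eps * T.
    """
    s = np.linspace(-T, T, n)
    f = (s > 0).astype(float)
    sigma = eps * T
    # Row i holds the kernel weights used to smooth the value at time s[i].
    K = np.exp(-0.5 * ((s[:, None] - s[None, :]) / sigma) ** 2)
    K /= K.sum(axis=1, keepdims=True)
    f_smooth = K @ f
    return float(np.mean(np.abs(f_smooth - f)))

for T in (1.0, 1000.0):
    for eps in (0.01, 0.1):
        print(T, eps, mean_smoothing_gap(T, eps))
```

In this toy the average gap grows roughly in proportion to ε and does not depend on T, which is the sense of "close on average, uniformly over intervals". It says nothing about whether measurement outcomes stay close.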

As a side note, I don’t think that the right distance measure is whether E_s and \tilde{E}_s are close “on average”. The right distance measure is something like the variational distance on the transcript. In both Peter’s example and mine (and indeed for any nontrivial example), the transcripts will look very different, and this will be observable.

But what you wrote about Peter’s example already contains the idea of “smoothing with time scale epsilon T”. The point is that observable quantities depend on this timescale T. But where does T come from? At noon (=time 0), I apply the gate U. At 12:01pm I look at the system. At 12:05pm I look at the system. At 1:30pm I look at the system. This is what experiments look like. There is no single “interval” [-T, T] relative to which the physics is defined.

The purpose of the time smoothing is to make a mathematical distinction between quantum systems that involve quantum fault-tolerance and those that do not. The (mathematical) test is that for every time interval [a,b] when we smooth using a scale proportional to the length of the interval (so the smoothing parameter is ε(b-a)), then the smoothed system is close to the original system in the measure that I specified. So by my criterion Peter’s example passes the test of “not enacting quantum fault-tolerance”, which is intuitively very reasonable.

Based on this test, I propose to consider the smoothed Lindblad evolutions themselves as useful models for approximately-unitary evolutions. (I referred to those as “approximately-pure” in the lecture (and earlier), but Mary-Beth pointed out that this was an awkward terminology.) I agree with some of the points that Peter made regarding this and I will comment on it separately.

I don’t understand the idea of distinguishing between systems that implement FT and those that don’t. Could you distinguish between an algorithm that uses quicksort and one that doesn’t? I think the answer to both is clearly “yes, in some cases, but certainly not in all cases.”

But also I don’t understand the status of SLE. The consensus picture of quantum noise is that there is a Hamiltonian with terms for the system, the environment, and then terms coupling them. Tracing out the environment gives us some effective noisy evolution on the system. Assuming the environment is rapidly mixing yields the Lindblad formalism. Is the content of the SLE conjecture that some part of that picture is wrong? Or should be modified? Under what conditions? “When implementing FTQC” is not really a mathematically well-defined condition. Nor is “when considering an interval of length T”.

I understand that you want to look at the distance measure which measures the average distance between Lindblad operators when averaged over a time T. However, I am not interested in that measure. I am interested in physically observable differences, such as (in my example) the rate at which the bit B flips, or (in Peter’s example) the direction of the spin at the end of the experiment. If such observable differences depend on an ill-defined parameter, such as “the length of the interval that we consider” then I view the theory as ill-defined.

Hi Aram,

“I don’t understand the idea of distinguishing between systems that implement FT and those that don’t. ”

My impression is that Gil does not believe that any computational model which allows fast factoring of integers is realistic, see slide 57 or point 10 above. Since FT allows us to do this, he wants to find a mechanism which invalidates FT in realistic systems. So in part this is reasoning going backwards from the process he wants to exclude, i.e. factoring, in order to find some general principle which will exclude it.

But since there is no direct derivation of this from the physics of the system, only the general idea for an effective theory in terms of SLE, the conjectures make very few concrete numerical predictions which one could use to refute them.

“Tracing out the environment gives us some effective noisy evolution on the system. ”

As I have understood Gil the idea is that the environment can’t be traced out in a way which leaves a simple noise model. He claims that the physical machine which implements the quantum computation will contribute in an essential and self-defeating way to the noise, so the noise will be unique for every computational process and physical implementation of it.

To me the arguments in favor of the conjecture look much too vague. I would like to see a complete derivation in even some simple concrete situation first, but of course it would be even nicer to get a mathematical argument which shows that the effective SLE theory can be ruled out.

Aram, I propose a formal distinction between realistic (approximately unitary) quantum systems and non-realistic such quantum systems. A system that for some time scale cannot be approximated by a smooth-in-time system (for smoothing in the same time scale) is not realistic. And I specify the notion of approximation. So you can regard this proposal like my old conjecture C. If you can exhibit a counterexample this will kill this proposal, like your example with Steve killed my old Conjecture C.

Peter’s example is interesting, but it is not a counterexample to my proposal. Your comment on measuring the distance is interesting, but, again, not directly relevant. My proposal is the main technical issue of this thread, and I think that we have an interesting discussion and I will certainly be happy to see ideas for a counterexample.

Indeed there are also interesting related conceptual issues.

“The consensus picture of quantum noise is that there is a Hamiltonian with terms for the system, the environment, and then terms coupling them. Tracing out the environment gives us some effective noisy evolution on the system. ”

This is an excellent point. What is missing from your description is the device realizing the system. The formal description of the environment can be a function of the device and thus can systematically depend on the evolution. In my talk I referred to ignoring this issue as the “trivial flaw”. (See also Klas’ two comments above.)

“I don’t understand the idea of distinguishing between systems that implement FT and those that don’t. Could you distinguish between an algorithm that uses quicksort and one that doesn’t?”

Understanding when an algorithmic task requires SORTING can be a very important issue and is crucial in understanding when we can hope for linear-time (or very-nearly linear time) algorithms. Let me remind you, Aram, of the analogy you made right at the beginning of your argument in our debate between fault-tolerant quantum computing and 3-SAT. It is certainly a central issue to understand which algorithmic tasks require 3-SAT. I think that understanding quantum systems that embody quantum fault tolerance is important, but in any case my distinction was the motivation for my proposition regarding smoothed evolutions and you don’t need to buy it.

(A minor point regarding Klas’ comment. For me the other reasons to doubt QC, and, in particular, the fact that QCs represent entirely new physical reality are stronger than factoring. Regardless of what you believe about factoring you will certainly check very very carefully and skeptically any specific attempt/approach for a factoring algorithm.)

I really think my example shows that even the simplest systems cannot follow Gil’s smoothed Lindblad evolution hypothesis while still matching the standard predictions of quantum mechanics. The executive summary is that if you simultaneously apply dephasing noise at angle 0 and dephasing noise at a small angle θ, they combine to produce depolarizing noise. Thus, a rotation by angle θ in a dephasing environment produces substantial depolarizing noise, while rotating by angle θ with separate dephasing noise before and after this rotation produces only a small amount of depolarization.

I don’t think this is something I can explain in the comments of this blog, as I don’t know any easy way to write equations in it, but we can certainly discuss this via other channels. Gil has asserted in these comments that he doesn’t believe that this is true, but my impression is that he hasn’t worked out the equations. If he does work out the equations and finds an error in my analysis, I would really like to hear about it.
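The executive summary can be checked numerically in the Bloch-vector picture. The sketch below is not Peter's own calculation, and it makes several assumptions: pure dephasing is modeled as exponential decay (rate `gamma`) of the Bloch components perpendicular to the dephasing axis, the rotation is about the Y axis, and "simultaneous" dephasing at angles 0 and θ is modeled as an equal-weight mix of the two generators acting for the whole duration. All names and numerical values are illustrative.

```python
import numpy as np

def dephasing_generator(axis, gamma):
    """Bloch-vector generator: components perpendicular to `axis` decay at rate gamma."""
    n = np.asarray(axis, dtype=float)
    n = n / np.linalg.norm(n)
    return -gamma * (np.eye(3) - np.outer(n, n))

def evolve(generator, r, t):
    """Apply exp(generator * t) to r (generator is symmetric, so eigh suffices)."""
    vals, vecs = np.linalg.eigh(generator)
    return vecs @ (np.exp(vals * t) * (vecs.T @ r))

def rotation_y(theta):
    """Rotation of the Bloch vector by theta about the Y axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])

def sequential_length(theta, gamma, t):
    """Dephase about Z, rotate by theta, dephase about Z again."""
    g_z = dephasing_generator([0, 0, 1], gamma)
    r = evolve(g_z, np.array([0.0, 0.0, 1.0]), t)
    r = rotation_y(theta) @ r
    r = evolve(g_z, r, t)
    return float(np.linalg.norm(r))

def simultaneous_length(theta, gamma, t):
    """Equal mix of dephasing at angle 0 and at angle theta for the full time 2t."""
    g_z = dephasing_generator([0, 0, 1], gamma)
    g_n = dephasing_generator([np.sin(theta), 0, np.cos(theta)], gamma)
    r = evolve(0.5 * (g_z + g_n), np.array([0.0, 0.0, 1.0]), 2 * t)
    return float(np.linalg.norm(r))

theta, gamma, t = 0.3, 1.0, 50.0
print(sequential_length(theta, gamma, t), simultaneous_length(theta, gamma, t))
```

In this model the mixed generator has a slowest decay rate of γ(1 - cos θ)/2, which is about γθ²/4 for small θ, so every Bloch component eventually decays. That decay is negligible only when γ·t·θ² is small, which is why strong dephasing forces a small smoothing window in this picture.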

Peter and Aram, my impression is that the discrepancy regarding Peter’s example is that you do not take my definition for the distance between two systems but some different notions. To clarify the difference, it may be useful to look at an example which is indeed approximately unitary, which is the original context for my SLE. (Indeed, I did not look carefully at the specific noise Peter described since what I said did not depend on the identity of E_t.)

One can write LaTeX formulas here by putting a dollar sign followed by the word “latex” before the formula and another dollar sign after the formula. In any case, I can post here whatever Peter sends me through any channel (and fix any attempt to post formulas directly).

I can’t speak for Peter, but I imagine that he is using the same notion of distance as me, which is variational distance in the transcript of measurement outcomes. Or even before that, the notion that “the results look very different and this is easy to detect with measurement(s).”

If there are N time steps for N large, and in one evolution, the identity operation happens each time step, and in the other, there is a 1/sqrt(N) chance of a bit flip in each time step, then the two evolutions will be close by the “average distance per time step” measure, but far in the more natural measures I describe above. Note that another flaw in the “average distance per time step” measure is that it is not invariant under rescaling time.
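The gap between the two distance measures in this example is easy to compute. In the sketch below the function names are hypothetical, and the "transcript" distance is taken to be the total variation distance between the all-identity record and the noisy record, which equals the probability that at least one flip occurs.

```python
import math

def per_step_distance(n):
    """Distance per time step: the flip probability 1/sqrt(n)."""
    return 1.0 / math.sqrt(n)

def transcript_distance(n):
    """Probability of at least one flip in n steps.

    The identity evolution never flips, so this is the total variation
    distance between the two full transcripts.
    """
    p = 1.0 / math.sqrt(n)
    return 1.0 - (1.0 - p) ** n

for n in (100, 10_000, 1_000_000):
    print(n, per_step_distance(n), transcript_distance(n))
```

As N grows, the per-step distance tends to 0 while the transcript distance tends to 1, since the expected number of flips is sqrt(N).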

But the distance measure is a bit of a red herring. Peter and I have described consequences of SLE that just look like wacky unrealistic physics, and that are contradicted by the most basic experiments in any quantum system that we can manipulate coherently: nuclear spins, photon polarization, electron spins, atomic orbitals, you name it.

You mentioned that there was an example where SLE seems natural. What is it?

Indeed, my example was constructed so as to have a large variational distance in the outcomes of measurements at the end of the evolution.

If the evolution of two systems is “close” in some sense, but evolution 1 gives results which correspond to experiments, and evolution 2 gives results which are at variance with experiments, I don’t see what good knowing that these evolutions are “close” does for you.

Peter wrote: “My belief is that smoothed Lindblad evolution cannot work unless the smoothing mechanism somehow depends on some property of the system which tells whether a real quantum computation is or is not taking place.”

Let me say briefly that I overall agree with this statement!

While you may agree with this statement in principle, your comments certainly seem to indicate you disagree with it in practice.

It’s funny that you and Klas regard my statements as saying something deep/excellent. Rather I am trying to say that the consensus view is that “there is some known physics that generates dynamics as a function of time,” then I describe that in terms of the most general/trivial/uncontroversial theories.

I would like to know how SLE compares with this consensus view. Does it generate predictions that deviate from known physics? Every time I try to analyze it for the simplest examples it gives predictions that seem to be nonsense. Exhibit A: my example. Exhibit B: Peter’s example. (You ask for a counterexample. Here are two. For a third, take any example where QM+noise yields any concrete prediction.) Where is the example where it seems to make sense?

“It’s funny that you and Klas regard my statements as saying something deep/excellent.”

Your question was excellent, Aram, since it demonstrated a deep misunderstanding that needs to be clarified. Let me try again.

Aram: “But also I don’t understand the status of SLE. The consensus picture of quantum noise is that there is a Hamiltonian with terms for the system, the environment, and then terms coupling them. Tracing out the environment gives us some effective noisy evolution on the system. Assuming the environment is rapidly mixing yields the Lindblad formalism.

Is the content of the SLE conjecture that some part of that picture is wrong?”

No! Of course not. As is very clear from the slides of my talk (and the formulas), including the few slides outlined in the post itself, the smoothed Lindblad evolutions form a special case of general Lindblad evolutions. (See also the reference in the presentation to the question of Lucas Svec.)

So how can you ask this question, Aram, when the answer is so obvious from the mathematics?

Gil,

I don’t think that Aram is disagreeing about smoothed Lindblad evolution being a special case of general Lindblad evolution. He’s talking about how you came up with the equations for smoothed Lindblad evolution.

In general, physicists don’t pick the Lindblad equation for a system out of thin air. They start with a Hamiltonian with terms for both the system interacting with itself and the system interacting with the environment. They then make the assumption that the environment is rapidly mixing (or at least, that the behavior of the system can be well approximated by ignoring the terms giving the environment’s interaction with itself, and replacing them by the assumption that the environment is rapidly mixing). They then trace out the environment.

Either that, or they do some experiments and figure out how to best fit the behavior of the system by a Lindblad equation.

I guess I asked the question because I am trying to use the idea of SLE to obtain predictions about what a system does. It seems to me that there are (at least) two possible ways to think about it:

1. SLE is a recipe for producing smoothed Lindblad evolutions. Start with some physically plausible Lindblad evolutions (like single-qubit decoherence) and then smooth according to some kernel.

2. SLE is an emergent property of (some) highly interacting Hamiltonians, just as Lindbladians are an emergent property of Hamiltonians in which the environment satisfies a Markovian condition. Thus to obtain the time evolution we need to start with some Hamiltonian (for which the conditions are unknown), and then come up with some kind of derivation that will show that SLE emerges as an effective theory.

3. something else entirely??

Peter and I have been responding to approach #1, arguing that it yields predictions dramatically different from what we observe in simple experiments.

But perhaps you had been thinking in terms of approach #2 all along? In this case, our criticism would still, I think, apply, but you might be able to argue that SLE is only an emergent property of Hamiltonians that have property X, and our examples don’t have property X. To which I would argue…, well, let me first pause to see what you think.

Aram’s question was indeed very good precisely because mathematically the answer is obvious. The SLE form a special case of general Lindblad evolutions so, of course, they do fit into the consensus picture of quantum noise: that there is a Hamiltonian with terms for the system, the environment, and then terms coupling them. The tension is not with the mathematics but with the interpretation and especially what the word “environment” refers to. (This was item #14 in the list of outlined issues of our debate.) In any case, the SLEs, and related classes of quantum systems that I consider, are all within the “consensus picture” as Aram defined it.

Hi Gil,

You say “they do fit into the consensus picture of quantum noise: that there is a Hamiltonian with terms for the system, the environment, and then terms coupling them.”

If this is so, then for some SLE describing a simple system, you should be able to explicitly give me the Hamiltonian with the terms for the system, with the environment, and for the system-environment coupling. You should be able to trace out the environment, after assuming that it mixes rapidly, and explicitly show how this gives me the Lindblad equation.

Physicists can do this for many simple systems, and get Lindblad equations which match reasonably well with experiment. From your discussions, you cannot do this.

Dear Peter, one way to think about my time-smoothing operation is as a filter. My conjecture is that if you have a quantum system and if, when you apply this filter, the resulting \tilde{E}_t has nothing to do with your original E_t, then you should regard your quantum system as unrealistic. So perhaps it is best to start with an explicitly given Hamiltonian with the terms for the system, with the environment, and for the system-environment coupling (like the one proposed in the recent paper by Preskill regarding quantum fault tolerance), and then once this explicit description is already given my condition will exclude as unrealistic a large number of such quantum systems based on an overly exotic “term for the system”.

Two little remarks: First, there is no need to consider only Lindblad evolutions to start with. The conjecture that the system is well approximated (in my sense) by the smoothed version applies for more general systems. Second, indeed my condition is implicit.

This SLE-as-filter idea makes sense. In that case, should we think of it as similar to the “QC is impossible” conjecture? i.e. the conjecture is that many-body physics will, for perhaps many different reasons, always result in an evolution that can be described (1) by SLE, and/or (2) by something without as much entanglement as we would need for Shor’s algorithm.

Note that there is one sense in which all time evolutions can be described by SLE. Simply put all the time evolution into the “noise” terms E_s, and say that the “intended” time evolution corresponds to the Hamiltonian H=0. So I really think you have to address this concern about SLE requiring knowledge of our intentions in order to be well-defined.

However, using the naive definition of intended vs. unintended, I believe that my example (of a very noisy bit with a controllable coupling to a less noisy bit) shows a situation in which SLE is less realistic than the conventional picture, and indeed contradicts experiments. I think that Peter’s does too.

Aram: “Simply put all the time evolution into the “noise” terms E_s, and say that the “intended” time evolution corresponds to the Hamiltonian H=0.”

Aram, to avoid this and similar issues, in all my conjectures I consider the E_s as a POVM-measurement, and, in addition, I mainly consider approximately-unitary evolutions.

“Aram, to avoid this issue, in all my conjectures I consider the E_s as POVM-measurement, and in addition I mainly consider approximately-unitary evolutions.”

I don’t understand either of these points. It’s worth mentioning here that both the Kraus operator formalism (which is the generalization of POVM measurement that also models the output quantum state instead of assuming that it is discarded) and the unitary formalism are fully general and can describe all quantum evolutions. For example, {E_1, …, E_k} are a collection of valid Kraus operators if E_1^dag E_1 + … + E_k^dag E_k = I. But we can always take E_1 to be unitary and E_2 = … = E_k = 0, and thereby perform unitary evolution within this formalism. More complicated examples can easily make an effective unitary evolution that passes whatever simple criteria you want for being “noisy”, e.g. a unitary gate on the qubit you care about, together with some depolarizing noise on the qubit you don’t care about. So you cannot look at a process and say it is unitary or that it is noisy. Of course, you can say it involves interaction with an environment, but this depends on your definition of environment. Many things that we call “unitary” could also be called noisy; e.g. if our qubits are nuclear spins and we use RF pulses to control them, then the coil delivering the pulse could contain degrees of freedom that are considered to be “environment”.
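A small numerical sketch of the two examples in this paragraph may help. It checks the completeness condition for (a) a unitary padded with zero operators and (b) a unitary gate on one qubit together with depolarizing noise on a second qubit. The choice of the Hadamard gate, the depolarizing strength p, and the helper names are assumptions made only for illustration.

```python
import numpy as np

def is_valid_kraus(ops, tol=1e-12):
    """Check the completeness relation sum_k E_k^dag E_k = I."""
    dim = ops[0].shape[1]
    total = sum(E.conj().T @ E for E in ops)
    return np.allclose(total, np.eye(dim), atol=tol)

def apply_channel(ops, rho):
    """Apply the channel rho -> sum_k E_k rho E_k^dag."""
    return sum(E @ rho @ E.conj().T for E in ops)

def purity(rho):
    return np.trace(rho @ rho).real

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
Had = (X + Z) / np.sqrt(2)  # Hadamard gate, an arbitrary choice of unitary

# (a) E_1 unitary, E_2 = 0: unitary evolution inside the Kraus formalism.
unitary_as_kraus = [Had, np.zeros((2, 2), dtype=complex)]

# (b) Unitary on qubit A, depolarizing noise of strength p on qubit B.
p = 0.3  # hypothetical noise strength
depol = [np.sqrt(1 - 3 * p / 4) * I2] + [np.sqrt(p / 4) * P for P in (X, Y, Z)]
gate_plus_noise = [np.kron(Had, D) for D in depol]

ket0 = np.array([[1, 0], [0, 0]], dtype=complex)
out_a = apply_channel(unitary_as_kraus, ket0)
out_b = apply_channel(gate_plus_noise, np.kron(ket0, ket0))

# Reduced state of qubit A: trace out qubit B.
reduced_a = np.einsum('abcb->ac', out_b.reshape(2, 2, 2, 2))
```

In case (b) the full two-qubit output is mixed, yet the reduced state of qubit A is pure. This is the sense in which a process can look "noisy" by a simple criterion while acting unitarily on the qubit one cares about.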

Anyway, this might not address what you are actually talking about. My concern is that literally the definition of SLE contains a reference to our intentions. Maybe you could address it by writing a definition of SLE that does not mention our intentions? And in the process you might rule out my argument that all evolutions are trivially in the SLE class.

Let us fix a Hilbert space and a time window. On this Hilbert space let’s consider a unitary evolution which you can think about as the intended evolution. The SLE conjecture will say that the evolution will be subject to noise which is well approximated by a smoothed-in-time POVM measurement where the rate is lower bounded by a certain intrinsic parameter of the unitary evolution. (More specifically, the rate in a time interval is lower bounded by a non-commutativity measure of the evolution in this interval.) On top of this you can have additional standard noise. (Look at the last post of our debate.)

The interesting consequence of this conjecture, or line of thought, is that smoothed-in-time noisy quantum processes, and in particular smoothed Lindblad evolutions, are relevant for modeling realistic noisy quantum systems. Let me mention that the most interesting aspect of my work is not to refute quantum computing via a no-go theorem of some sort, but rather to base interesting models of noisy quantum systems on the idea that quantum fault tolerance fails.

What you write leaves open the possibility that my intended unitary evolution is “nothing happens” but the noise process is “whatever quantum dynamics I secretly intended in the first place.” In this case, the process meets your definition of SLE without introducing any new (e.g. multiqubit) noise processes.

Aram, I think that I lost you. First, are you saying that every unitary evolution can be regarded as a small perturbation described by POVM-measurement of the identity evolution? And if the answer is yes, what precisely do you learn from this?

My point is that the POVM formalism and the unitary formalism are each, in a sense, universal. It’s like describing classical computation using CAs or Turing machines; each can simulate the other. Or like thinking of matrices as linear operators, or as rectangles full of numbers. They are just two ways of describing the same thing, and there is no assumption that anything is a small perturbation.
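One direction of this equivalence can be sketched numerically: any unitary on system plus environment, with the environment started in a fixed state, induces Kraus operators on the system alone, and applying those operators gives the same result as evolving jointly and tracing out the environment. The dimensions, the random unitary, and the function name below are assumptions made only for illustration.

```python
import numpy as np

def kraus_from_unitary(U, dim_sys, dim_env):
    """Kraus operators E_k = (I tensor <k|) U (I tensor |0>).

    U acts on system tensor environment (system index first); the
    environment is assumed to start in its basis state |0>.
    """
    # Axes after reshape: [sys_out, env_out, sys_in, env_in]
    U4 = U.reshape(dim_sys, dim_env, dim_sys, dim_env)
    return [U4[:, k, :, 0] for k in range(dim_env)]

rng = np.random.default_rng(0)
dim_sys, dim_env = 2, 3
d = dim_sys * dim_env

# A random unitary from the QR decomposition of a random complex matrix.
M = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
U, _ = np.linalg.qr(M)

kraus = kraus_from_unitary(U, dim_sys, dim_env)
completeness = sum(E.conj().T @ E for E in kraus)

# Compare: Kraus form versus "evolve jointly, then trace out the environment".
rho = np.array([[0.7, 0.2], [0.2, 0.3]], dtype=complex)
env0 = np.zeros((dim_env, dim_env), dtype=complex)
env0[0, 0] = 1.0
joint = U @ np.kron(rho, env0) @ U.conj().T
via_trace = np.einsum('aebe->ab', joint.reshape(dim_sys, dim_env, dim_sys, dim_env))
via_kraus = sum(E @ rho @ E.conj().T for E in kraus)
```

With a one-dimensional environment the construction returns the single operator U itself, which is the "unitary as a special case of Kraus" direction.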

Thus, there is no canonical way to divide the evolution of the system into a “unitary part” and a “POVM part.” Yet the definition of SLE treats these parts differently.

I made this objection before, but it becomes more important when we try to do any kind of concrete calculation with, or make any kind of concrete statement about, SLE.

Hi Aram,

I realize that I have not actually stated what I myself believe on the issue of QC and fault tolerance, only what I think Gil has been saying.

I believe that in time universal quantum computers will be built. The engineering challenge is a non-trivial one, but I don’t see any fundamental obstacles against it.

That the machinery will affect the form of the noise, which I believe is Gil’s central thesis, looks obvious, and is true for classical computers as well, but I don’t see any physical reason why this feedback should be as strong, destructive, and selective as these conjectures imply.

At the same time I think the long Aram-Kalai debate has been useful, and interesting, since it has put a finger on some of the assumptions underlying error correction and the noise models.

Klas, it is worth mentioning that a standard assumption of FTQC is that the machinery affects the form of the noise, although of course it is always possible that the assumptions about this are not sufficiently pessimistic. But it’s not like this point was never considered before. It is in fact pretty central to many of the constructions.

Klas has done an excellent job of describing one crucial element of my point of view, and this is especially impressive given that he does not agree with my overall assessment. Aram is correct in saying that in some standard models, too, the machinery affects the form of the noise. The main problem (or the “trivial flaw” as I refer to it) is not that these models disregard the machinery or that they are too optimistic, but rather that these models are heavily based on the machinery being a general-purpose quantum computer. If you take this for granted, then you abstract away too much of the physics, and the failure or success of quantum fault-tolerance is essentially narrowed down to a single parameter.

I don’t understand your argument. If we try to apply the same reasoning to classical machines, doesn’t it work for them, too? If we have a noisy, special-purpose classical computer, we can’t use the fault-tolerance subroutine on it, and so when it gets large enough, it won’t work. Why is this an argument against general-purpose classical computers?

Or, maybe more to the point, what makes general-purpose quantum computers impossible but general-purpose classical computers possible?

“I don’t understand your argument.”

Peter, as I said in the lecture, my purpose is to describe a viable alternative to the possibility of quantum computers. To make it into an argument, much more theoretical and empirical work is needed. (For some special cases, like topological quantum computing, I do offer an actual argument.) Indeed, Klas understands but doesn’t “buy” this fragment of my proposed alternative picture.

“If we try to apply the same reasoning to classical machines, doesn’t it work for them, too?”

In the classical world sometimes this reasoning applies and sometimes it does not. If your computer (or brain) is used for composing a document and you suddenly decide to use it for computing the 10^8-th zero of Riemann’s zeta function, then this can be done by the same device. But if you ride your bicycle around the block and suddenly decide to take it on a manned mission to Mars, then you had better replace your device. I agree that a quantum computer sounds analogous to a digital computer and not to a bicycle. (After all, it is called “quantum c o m p u t e r.”) But we have to examine both possibilities.

“Or, maybe more to the point, what makes general-purpose quantum computers impossible but general-purpose classical computers possible?”

This was a central part of my debate with Aram. (See this post and this post.) My conjectures largely dodge this point, but I certainly discuss it in my papers and we did gain some interesting insights about it. Understanding the major difference between quantum computation (and fault tolerance) and classical computation (and fault-tolerance) is a major challenge to believers and doubters alike.

Aram, this is indeed a line of thought you expressed also in our debate. It is related to the thought that there is no objective meaning to “noise”. This is certainly an interesting foundational issue but I don’t see its particular relevance here. What is your conclusion, Aram, from the mathematical equivalence between the POVM formalism and the unitary formalism? What does it say, in your opinion, about open quantum systems? Or about quantum fault-tolerance?

When we fix a Hilbert space, there is a distinction between pure states and mixed states, and between a unitary evolution and a noisy quantum evolution. We can talk about perturbations to unitary evolutions, and we can talk about noisy evolutions that represent an approximately pure state at every time. (I referred to such evolutions as “approximately pure.”) Indeed, let us fix both a Hilbert space and a time interval for the rest of the comment.

“Thus, there is no canonical way to divide the evolution of the system into a “unitary part” and a “POVM part.” Yet the definition of SLE treats these parts differently.”

1) When you restrict the unitary evolution of our world to a small Hilbert space, the restricted evolution is not unitary. This applies, in particular, to attempts to control a quantum system or to describe a quantum system on a small Hilbert space.

2) A reasonable description of such a system is in terms of a unitary part and an error described by POVM-measurement. (It is neither canonical nor the most general type of noisy quantum evolution.)

3) Smoothing-in-time can be regarded as a filter on the space of such noisy quantum evolutions.

4) If you give me a noisy quantum evolution described in terms of a unitary part and the POVM noise then I have a mathematical criterion to test if your evolution is realistic. In order to be realistic the filtered version of the evolution should be close (in a sense that I described) to the original evolution.

5) In view of this criterion I propose to study smoothed Lindblad evolutions as an interesting class of realistic noisy quantum evolutions.

The mathematical equivalence between the unitary and Kraus-operator (aka POVM) formalisms to me means that we cannot meaningfully base other definitions on distinctions between them.

Note that fixing the Hilbert space and the time interval are examples of looking at our intentions, unless your claim is that this holds for all Hilbert spaces and all time intervals, which I guess it is.

Let’s say that we do this, though. Fix a Hilbert space of our n qubits and call everything else the environment. Our control pulses are never perfectly unitary, as they always involve applying some kind of electromagnetic field controlled by noisy electronics. So we never apply a unitary. All we do is apply different kinds of noisy operations, some approximately unitary, some far from unitary. The set of unitary operations is measure zero in the space of all operations, just like low-rank matrices have measure zero among all matrices. There is no way a theory that makes predictions about observable outcomes can be sensitive to this.

Let me go back to two central issues raised by Peter.

1) Can you have smoothing on all time scales, and is my theory viable without it?

This was Peter’s first concern both in my lecture and in his early comments (e.g. here). I thought that this was an intriguing idea, but I don’t see why it is necessary, since I was talking all along about approximations, and it is possible for a quantum system to be well approximated (in my sense) by an SLE for every time interval. I tend to agree with Peter that if you fix the Hilbert space you cannot have smoothing on all scales. (Note that the Hilbert space is also a “window” of a sort, just like the time window, and different scales are also represented by different Hilbert spaces.) Having a model with multi-scale forms of smoothing, where you consider both different time scales and different Hilbert subspaces, may be possible and quite interesting!

2) Can you determine the “correct” smoothing-scale from the evolution itself?

Ahh, this was a revised question that Peter asked. And here, I briefly said that I overall agree. (Both Peter and Aram are quite tough: Peter disagrees with me agreeing with him, and Aram criticizes me for referring to one of his questions as excellent. 🙂 ) So let me explain the matter less briefly.